Artificialanalysis AI Review: What Data Leaders Should Know

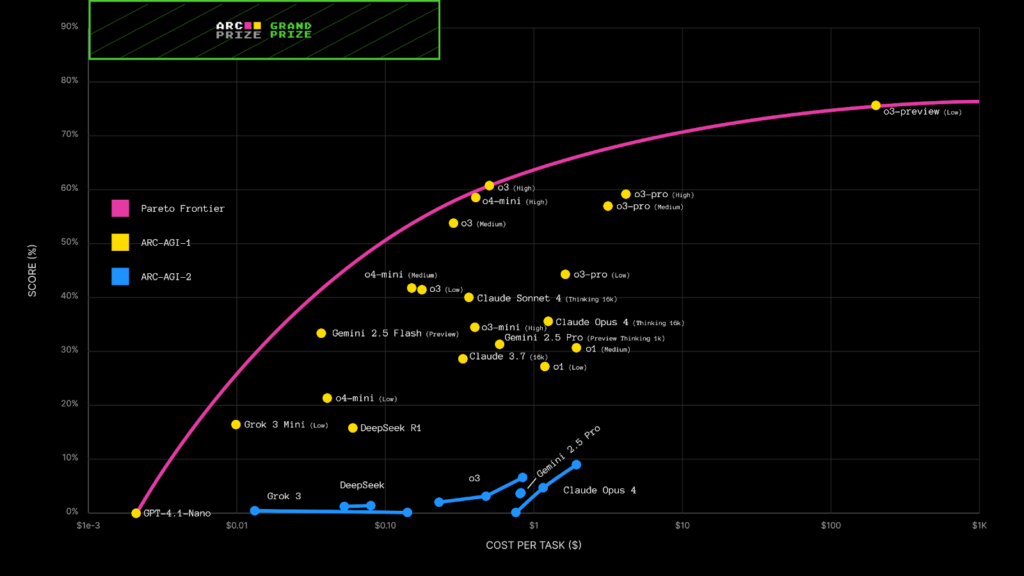

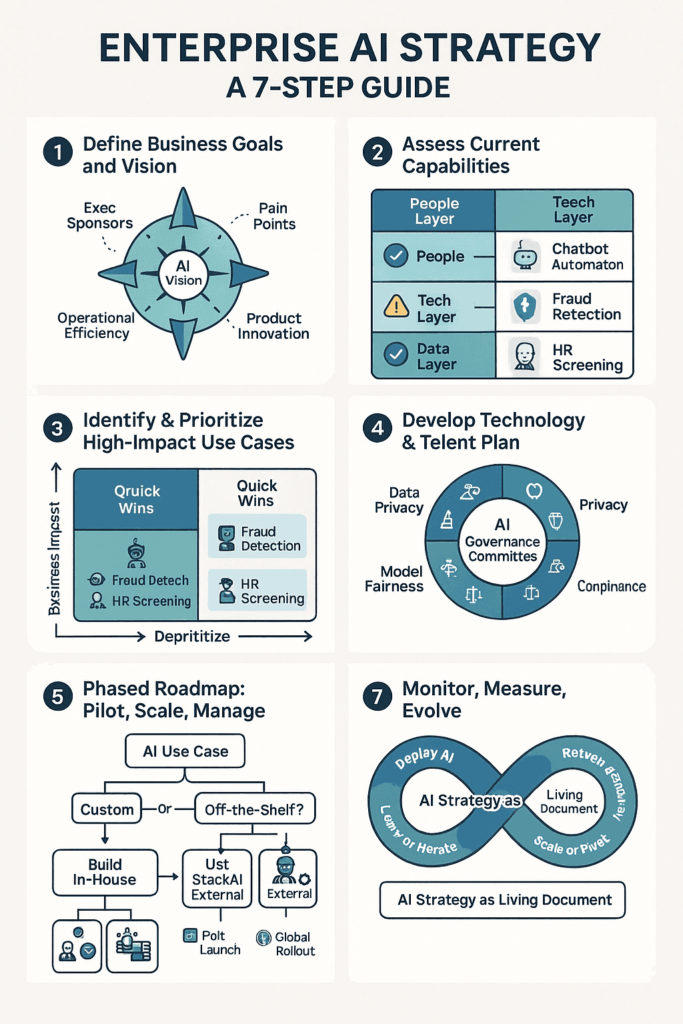

Artificial intelligence models are evolving rapidly. New large language models, multimodal systems, and enterprise AI tools are released frequently. For data leaders, keeping track of performance benchmarks, pricing efficiency, and model quality is increasingly complex.

Choosing the right model for production use is not simply a technical decision. It affects cost structure, latency, compliance posture, and competitive positioning.

This is where artificialanalysis ai becomes relevant.

In this artificialanalysis ai review, we explore how the platform helps data leaders evaluate, benchmark, and compare AI models in a structured way. The focus is on transparency, measurable performance metrics, and informed decision making.

The problem it solves is evaluation overload. With dozens of AI models on the market, enterprises need independent performance insights before committing to integration.

Artificialanalysis ai is designed for chief data officers, AI architects, machine learning teams, and enterprise decision makers responsible for AI adoption strategy.

What Is Artificialanalysis AI

Artificial Analysis is a benchmarking and evaluation platform focused on analyzing and comparing artificial intelligence models. Rather than building models itself, artificialanalysis ai provides structured performance data, cost comparisons, and standardized testing results.

Within the broader SaaS ecosystem, artificialanalysis ai operates in the AI intelligence and benchmarking category. It supports enterprises by offering clarity in a fast-moving AI vendor landscape.

For organizations evaluating foundation models, generative AI systems, or enterprise-grade LLM deployments, artificialanalysis ai functions as an analytical reference layer.

It provides transparency where marketing claims may otherwise dominate.

How Artificialanalysis AI Works

Understanding how artificialanalysis ai works helps data leaders assess its strategic value.

Step One: Model Benchmark Collection

The platform gathers performance data from standardized testing frameworks across multiple AI models.

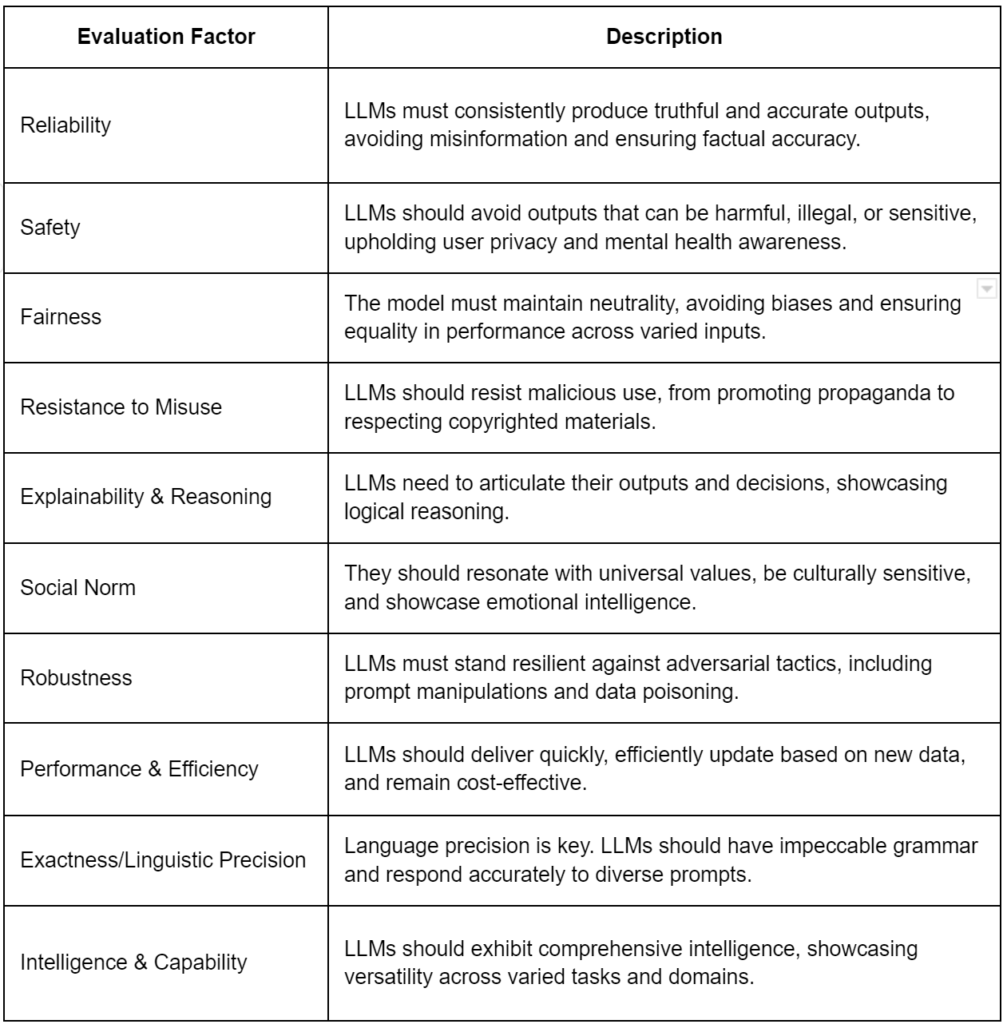

Step Two: Metric Normalization

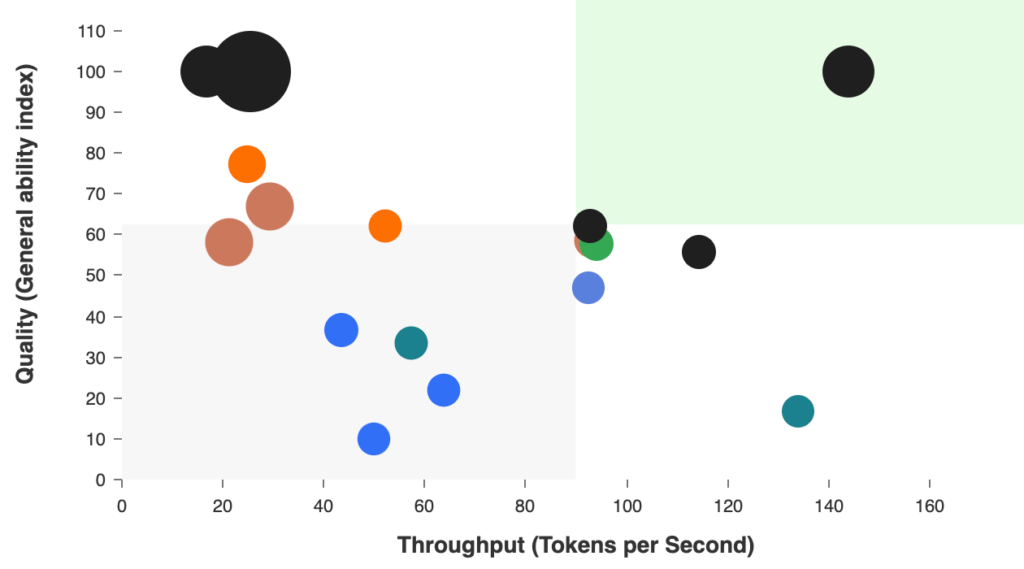

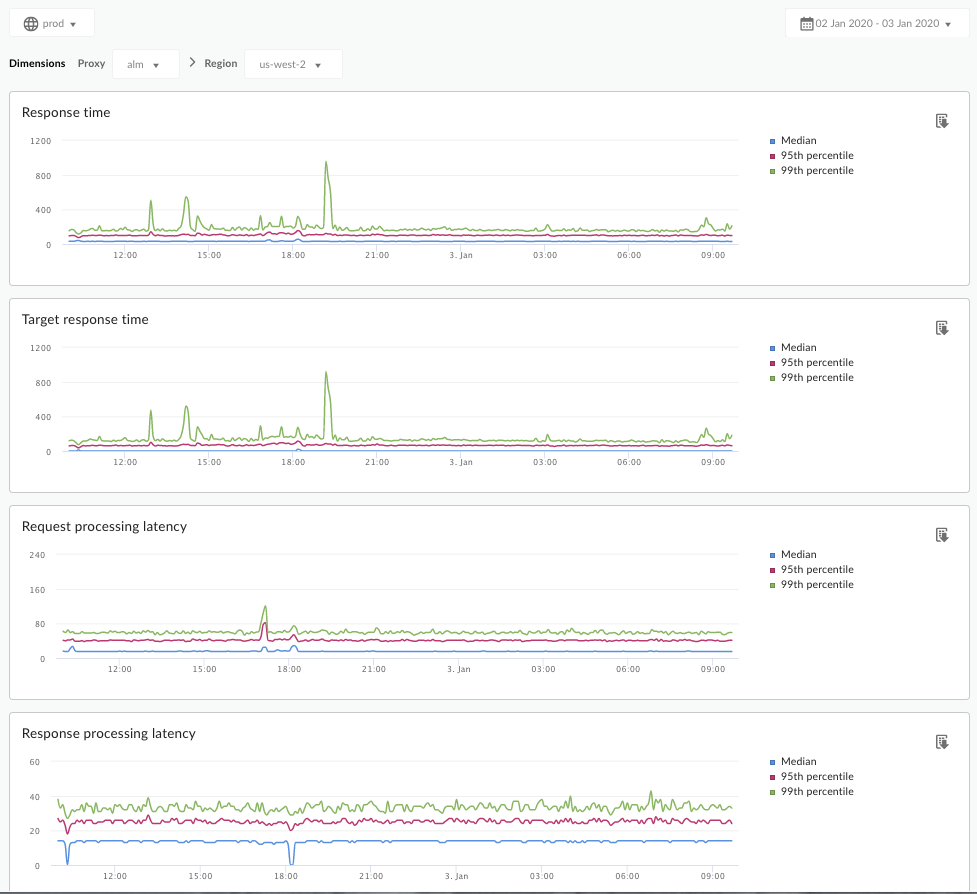

Metrics such as reasoning accuracy, language fluency, coding capability, and cost efficiency are standardized for comparison.
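As a rough illustration of what standardizing metrics for comparison can look like, the sketch below applies min-max scaling so that every metric lands on a 0 to 1 scale where higher is better. The platform's actual normalization method is not described in this review; the function name, model names, and figures are hypothetical and chosen only for illustration.

```python
from typing import Dict


def min_max_normalize(values: Dict[str, float], higher_is_better: bool = True) -> Dict[str, float]:
    """Scale raw metric values to the 0-1 range so different metrics share one scale."""
    lo, hi = min(values.values()), max(values.values())
    if hi == lo:
        # Every model ties on this metric, so give each the same neutral score.
        return {name: 0.5 for name in values}
    scaled = {name: (v - lo) / (hi - lo) for name, v in values.items()}
    if not higher_is_better:
        # Flip metrics such as price, where a lower raw value is preferable.
        scaled = {name: 1.0 - s for name, s in scaled.items()}
    return scaled


# Hypothetical figures, not real benchmark results.
reasoning_accuracy = {"model_a": 0.82, "model_b": 0.74, "model_c": 0.90}
price_per_million_tokens = {"model_a": 3.0, "model_b": 1.0, "model_c": 5.0}

normalized_accuracy = min_max_normalize(reasoning_accuracy)
normalized_cost = min_max_normalize(price_per_million_tokens, higher_is_better=False)
```

Min-max scaling is only one option. It is sensitive to outliers, so a team reproducing this internally might prefer ranks or z-scores depending on how the scores are distributed.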

Step Three: Comparative Analysis

Users access dashboards that compare models across key dimensions.

Step Four: Cost And Performance Insights

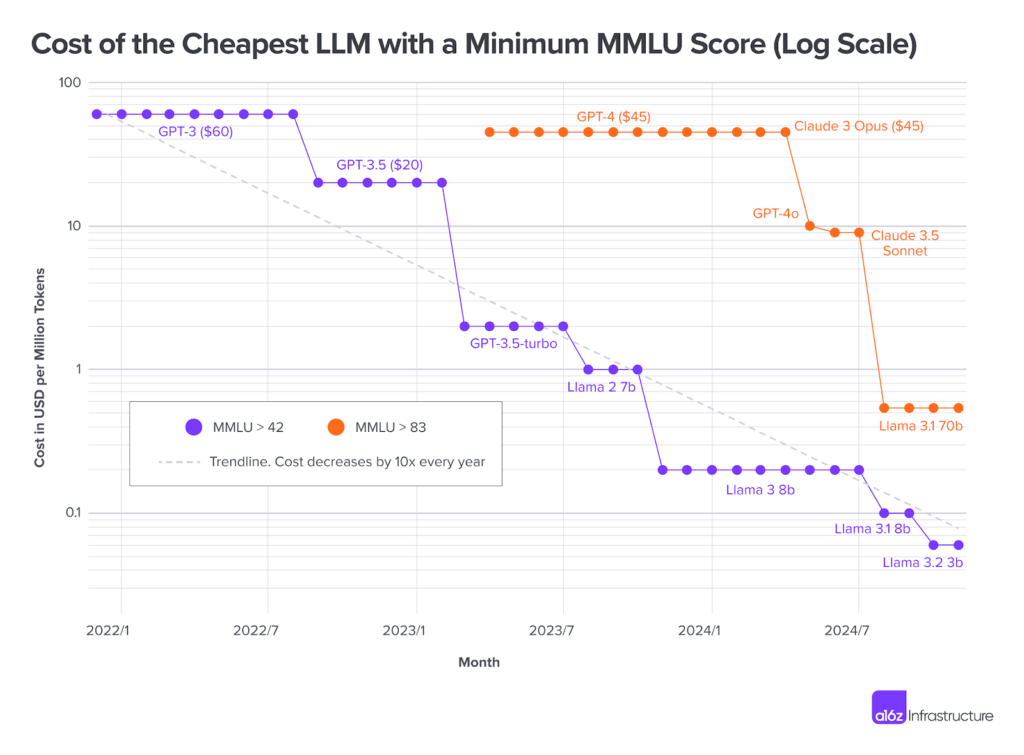

Artificialanalysis ai highlights tradeoffs between performance and operational cost.
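One common way to reason about a performance versus cost tradeoff is to keep only the models that no other model beats on both dimensions at once, often called the Pareto frontier. The sketch below is a generic illustration of that idea, not the platform's documented method; the model names and figures are hypothetical.

```python
from typing import Dict, List, Tuple


def pareto_frontier(models: Dict[str, Tuple[float, float]]) -> List[str]:
    """Return the names of models that are not dominated on (quality, cost).

    A model is dominated when another model is at least as good on quality and
    at least as cheap, and strictly better on at least one of the two.
    """
    frontier = []
    for name, (quality, cost) in models.items():
        dominated = any(
            other_q >= quality and other_c <= cost and (other_q > quality or other_c < cost)
            for other, (other_q, other_c) in models.items()
            if other != name
        )
        if not dominated:
            frontier.append(name)
    return sorted(frontier)


# Hypothetical (quality score, price per million tokens) pairs.
candidates = {
    "model_a": (0.82, 3.0),
    "model_b": (0.74, 1.0),
    "model_c": (0.90, 5.0),
    "model_d": (0.80, 4.0),
}
frontier = pareto_frontier(candidates)
```

In this made-up data, model_d drops out because model_a scores higher and costs less. The remaining models each represent a defensible choice depending on how much quality a team is willing to pay for.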

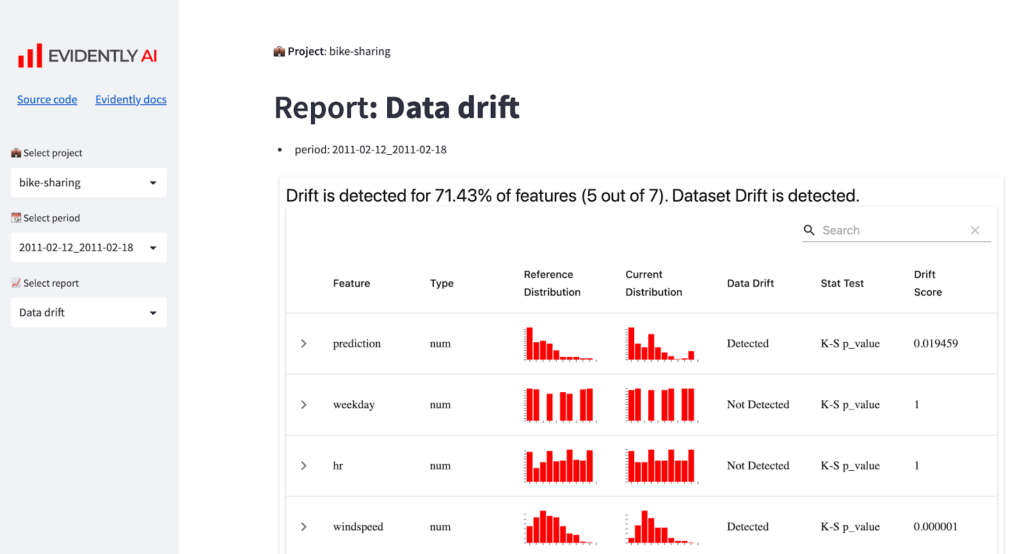

Step Five: Continuous Updates

As new models are released, benchmarks are updated to reflect market changes.

The workflow emphasizes clarity and comparability rather than raw experimentation.

Core Features Overview

Artificialanalysis ai offers several core features tailored to enterprise evaluation.

Standardized Model Benchmarks

Performance is measured using consistent evaluation criteria.

Why it matters: Enables apples-to-apples comparison.

Cost Efficiency Analysis

Operational pricing metrics are integrated into comparisons.

Why it matters: AI deployment costs significantly impact enterprise budgets.

Capability Breakdown

Models are evaluated across tasks such as reasoning, coding, and text generation.

Why it matters: Aligns model choice with specific use cases.
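To make the link between capability scores and a specific use case concrete, the sketch below ranks models by a weighted average of per-task scores. The weights express how much each task matters for one workload, here a coding assistant. All names, scores, and weights are hypothetical assumptions, not data from the platform.

```python
from typing import Dict


def weighted_score(capabilities: Dict[str, float], weights: Dict[str, float]) -> float:
    """Weighted average of per-task scores; weights need not sum to 1."""
    total_weight = sum(weights.values())
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive number")
    return sum(capabilities[task] * w for task, w in weights.items()) / total_weight


# Hypothetical per-task scores on a 0-1 scale.
capability_scores = {
    "model_a": {"reasoning": 0.8, "coding": 0.6, "text_generation": 0.9},
    "model_b": {"reasoning": 0.7, "coding": 0.9, "text_generation": 0.7},
}

# Hypothetical weights for a coding-assistant use case.
coding_assistant_weights = {"reasoning": 0.3, "coding": 0.6, "text_generation": 0.1}

ranking = {
    name: weighted_score(scores, coding_assistant_weights)
    for name, scores in capability_scores.items()
}
best_model = max(ranking, key=ranking.get)
```

With these weights, model_b ranks first because coding dominates the score. Shifting weight toward text generation would favor model_a instead, which is why the weights should come from the use case rather than from a generic leaderboard.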

Ongoing Model Tracking

New releases are incorporated into comparison datasets.

Why it matters: Keeps decision makers up to date in a rapidly evolving market.

Each feature supports strategic clarity for AI adoption.

Key Benefits For Users

Data leaders prioritize accuracy and accountability.

Informed Model Selection

Structured comparisons reduce reliance on vendor marketing claims.

Budget Optimization

Cost-performance insights help avoid overspending.

Strategic Alignment

Capabilities can be matched to enterprise objectives.

Reduced Evaluation Time

Teams save time otherwise spent running independent benchmarks.

Enhanced Governance

Benchmark transparency supports internal oversight.

The primary benefit is strategic confidence in AI investment decisions.

Who Should Use This Software

Artificialanalysis ai is best suited for enterprise and technical decision makers.

Chief Data Officers

Leaders defining AI strategy benefit from comparative insights.

ML Engineering Teams

Engineers selecting foundation models gain structured evaluation support.

Procurement Teams

Cost comparisons support vendor negotiations.

AI Researchers

Researchers analyzing model performance trends can leverage benchmark data.

Organizations without active AI initiatives may find limited direct value.

Use Cases And Real World Scenarios

Scenario One: Enterprise LLM Selection

A large enterprise evaluates multiple language models for internal chatbot deployment. Artificialanalysis ai provides performance and cost comparisons.

Result: Confident vendor selection aligned with use case needs.

Scenario Two: Budget Planning

A data team forecasts AI infrastructure expenses. Cost efficiency metrics guide platform choice.

Result: Optimized AI spending.
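A back-of-the-envelope forecast for this kind of scenario can be sketched as follows, assuming token-based pricing with separate input and output rates. The traffic volume, token counts, and prices are hypothetical placeholders that a team would replace with its own measurements and current vendor pricing.

```python
def monthly_cost(
    requests_per_day: int,
    input_tokens_per_request: int,
    output_tokens_per_request: int,
    input_price_per_million: float,
    output_price_per_million: float,
    days: int = 30,
) -> float:
    """Estimate monthly spend in the same currency as the per-million-token prices."""
    cost_per_request = (
        input_tokens_per_request * input_price_per_million
        + output_tokens_per_request * output_price_per_million
    ) / 1_000_000
    return cost_per_request * requests_per_day * days


# Hypothetical workload: 10,000 requests a day, 500 input and 200 output tokens each,
# priced at 1.00 per million input tokens and 3.00 per million output tokens.
estimate = monthly_cost(
    requests_per_day=10_000,
    input_tokens_per_request=500,
    output_tokens_per_request=200,
    input_price_per_million=1.0,
    output_price_per_million=3.0,
)
```

Each request costs 0.0011 under these assumptions, which comes to 11 per day and roughly 330 over a 30-day month. Running the same function with each candidate model's prices gives a like-for-like spending comparison.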

Scenario Three: Competitive Benchmarking

An AI startup monitors how its internal model compares with leading alternatives.

Result: Strategic positioning and roadmap adjustments.

These scenarios highlight how benchmarking informs strategy rather than experimentation alone.

User Experience And Interface

Ease of interpretation is essential for benchmarking tools.

Artificialanalysis ai typically offers dashboard views with visual charts, comparative graphs, and sortable metrics.

The interface is designed for technical leaders but structured clearly enough for executive review.

Visual clarity supports board-level presentations and internal reporting.

Minimal friction in navigation enhances efficiency for busy decision makers.

Pricing And Plans Overview

Benchmarking platforms often operate on subscription models, especially for enterprise access.

Artificialanalysis ai may offer tiered access depending on depth of insights, historical data availability, and API integration options.

Enterprises should evaluate subscription costs relative to the value of avoiding misaligned AI investments.

Even a single incorrect model selection can incur significant long-term operational expenses.

Testing access or demos may help validate relevance before full adoption.

Pros And Cons

A balanced artificialanalysis ai review includes strengths and limitations.

Pros

Standardized model comparisons

Transparent cost-performance metrics

Supports strategic AI decision making

Saves evaluation time

Enhances governance

Cons

Does not build or host models

May not replace internal testing

Best suited for organizations with active AI strategy

It complements rather than replaces in-house experimentation.

Comparison With Similar Tools

Artificialanalysis ai operates in a niche benchmarking space. It may be compared conceptually with academic AI benchmarking initiatives, such as those from Stanford University, or with performance transparency efforts by companies like OpenAI.

Unlike vendor-specific reports, artificialanalysis ai aims to provide independent cross-model comparisons in one centralized interface.

Organizations should evaluate neutrality, update frequency, and metric depth when comparing benchmarking sources.

Buying Considerations For Decision Makers

Before adopting artificialanalysis ai, data leaders should consider several factors.

AI Deployment Maturity

Organizations actively deploying models gain the most value.

Budget Exposure

Higher AI spending increases the importance of cost benchmarking.

Governance Requirements

Structured evaluation supports compliance and board-level accountability.

Internal Expertise

Teams should still validate benchmarks against specific use cases.
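A minimal sketch of such a validation is a small internal test set scored with exact-match accuracy. The `stub_model` function below is a hypothetical stand-in for a real model call, and the two examples are invented; a real check would use a team's own prompts, expected answers, and model client.

```python
from typing import Callable, List, Tuple


def exact_match_accuracy(predict: Callable[[str], str], examples: List[Tuple[str, str]]) -> float:
    """Share of examples where the model output equals the expected answer.

    Comparison ignores case and surrounding whitespace.
    """
    if not examples:
        raise ValueError("examples must not be empty")
    correct = sum(
        1
        for prompt, expected in examples
        if predict(prompt).strip().lower() == expected.strip().lower()
    )
    return correct / len(examples)


def stub_model(prompt: str) -> str:
    """Hypothetical stand-in for a real model call; replace with your own client."""
    canned = {"Capital of France?": "Paris", "2 + 2 = ?": "5"}
    return canned.get(prompt, "")


# Invented internal examples: (prompt, expected answer).
internal_examples = [("Capital of France?", "paris"), ("2 + 2 = ?", "4")]
accuracy = exact_match_accuracy(stub_model, internal_examples)
```

Exact match suits short factual answers. Open-ended tasks such as summarization usually need a different scoring approach, so the metric should be chosen to fit the use case being validated.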

Reviewing enterprise case studies that demonstrate improved vendor selection can strengthen the business case.

Security, Privacy, And Compliance

Artificialanalysis ai primarily processes benchmark and comparison data rather than proprietary enterprise information.

However, organizations should review platform security policies if integrating through APIs.

Data governance remains critical when using benchmark insights to guide deployment.

Transparent methodology strengthens trust in evaluation outputs.

Support And Documentation

Comprehensive documentation supports user adoption.

Artificialanalysis ai typically provides explanations of benchmarking methodologies and metric definitions.

Clear documentation ensures data leaders interpret results accurately.

Responsive support channels enhance enterprise confidence.

Final Verdict

This artificialanalysis ai review highlights a specialized benchmarking platform designed to bring clarity to enterprise AI decision making.

Its strengths lie in standardized comparisons, cost-performance insights, and strategic transparency. For data leaders navigating a crowded AI vendor landscape, artificialanalysis ai offers a structured approach to evaluation.

It is best suited for organizations with active AI deployment strategies and measurable infrastructure budgets.

While it does not replace internal validation testing, it can reduce initial evaluation friction.

For enterprises operating in competitive Tier One markets, artificialanalysis ai represents a strategic intelligence tool that supports smarter AI investments and stronger governance frameworks.

Frequently Asked Questions

Is Artificialanalysis AI A Model Provider?

No. It benchmarks and compares AI models rather than building them.

Can Artificialanalysis AI Help Reduce AI Costs?

Yes. Cost-performance insights support budget optimization.

Is Artificialanalysis AI Suitable For Small Businesses?

It is most valuable for organizations with active AI evaluation needs.

Does Artificialanalysis AI Replace Internal Testing?

No. It complements internal experimentation with standardized comparisons.

Can Artificialanalysis AI Support Enterprise Governance?

Yes. Transparent benchmarking strengthens oversight and accountability.